Do you avoid reviewing performance without metrics?

Last updated by Yazhi Chen [SSW] about 2 months ago.

If a client says:

"This application is too slow, I don't really want to put up with such poor performance. Please fix."

We don't jump straight in, clean up the code, and reply with something like:

"I've looked at the code and cleaned it up - not sure if this is suitable - please tell me if you are OK with the performance now."

A better way is:

- Ask the client to tell us how slow it is (in seconds) and how fast they ideally would like it (in seconds)

- Add some code to record the time the function takes to run

- Reproduce the steps and record the time

- Change the code

- Reproduce the steps and record the time again

- Reply to the client: "It was 22 seconds, you asked for around 10 seconds. It is now 8 seconds."
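The steps above can be sketched in a few lines of Python. This is a minimal illustration, not the sample code the rule refers to: `time_call`, `format_reply`, and `slow_operation` are hypothetical names, and taking the median of several runs is an assumption made here to smooth out noise between runs.

```python
import time
from statistics import median


def time_call(func, *args, repeats=5, **kwargs):
    """Run func several times and return the median wall-clock time in seconds."""
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()
        func(*args, **kwargs)
        durations.append(time.perf_counter() - start)
    return median(durations)


def format_reply(before_s, target_s, after_s):
    """Build the reply to the client from the measured numbers."""
    return (
        f"It was {before_s:.0f} seconds, you asked for around "
        f"{target_s:.0f} seconds. It is now {after_s:.0f} seconds."
    )


if __name__ == "__main__":
    # Hypothetical stand-in for the function the client says is slow.
    def slow_operation():
        sum(i * i for i in range(1_000_000))

    measured = time_call(slow_operation)
    print(f"Median over 5 runs: {measured:.3f} s")
```

Run `time_call` once before changing the code and once after, using the same steps each time, then pass both results and the client's target to `format_reply`.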

Also, never forget to do incremental changes in your tests!

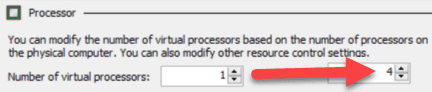

For example, if you are trying to find the optimal number of processors for a server, do not go from 1 processor straight to 4 processors.

Do it incrementally: add 1 processor at a time, measure the results, and then add more.

This gives you the most complete set of data to work from.

The numbers matter because performance is an emotional thing; sometimes it just *feels* slower. Without numbers, a person cannot know for sure whether something has become quicker. By making the changes incrementally, you can be sure that bad changes aren't canceling out the effect of good changes.
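One way to keep incremental measurements honest is to record a number after every step and flag any step that got slower. The sketch below assumes you supply your own `measure` function (for example, one that runs the workload with a given processor count and returns seconds); `measure_each_step` and `steps_that_got_slower` are hypothetical helper names.

```python
from typing import Callable, Iterable, List, Tuple


def measure_each_step(
    steps: Iterable[int], measure: Callable[[int], float]
) -> List[Tuple[int, float]]:
    """Measure after every step instead of only at the start and the end."""
    return [(step, measure(step)) for step in steps]


def steps_that_got_slower(results: List[Tuple[int, float]]) -> List[int]:
    """Return the steps whose time is worse than the step just before them."""
    return [
        step
        for (_, previous_time), (step, current_time) in zip(results, results[1:])
        if current_time > previous_time
    ]
```

Calling `measure_each_step(range(1, 5), measure)` measures 1, 2, 3, and 4 processors in turn. If step 3 turns out slower than step 2, `steps_that_got_slower` reports it, whereas jumping straight from 1 to 4 would have hidden that regression behind the overall improvement.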

Samples

For sample code on how to measure performance, please refer to the rule "Do you have tests for Performance?" in Rules To Better Unit Tests.